April 1, 2025

The Role of AI Due Diligence in Mergers & Acquisitions: What Investors Need to Know

When you’re evaluating a company for a potential acquisition, you’re not just looking at financials, teams, or market positioning anymore. You’re also looking at something much more complex and far less visible: artificial intelligence. And that’s exactly why AI due diligence needs to be on your radar from day one.

AI is everywhere now. It might be powering decisions behind the scenes, shaping the customer experience, or even running key parts of the business. It’s often right at the heart of what makes a company valuable. And that means the risks tied to that AI are baked in too.

This is why AI due diligence has become a critical part of modern Mergers & Acquisitions (M&A) strategy. It’s not just about checking that the technology works. It’s about understanding what risks come with it, whether it’s compliant with regulations like the EU AI Act, whether it behaves fairly, and whether it’s secure. If that sounds like a lot, that’s because it is.

Ignoring AI due diligence can lead to costly surprises after the deal closes. The AI might be legally exposed, ethically questionable, or technically unsound. But with the right questions and a structured approach, these risks can be uncovered early, giving investors a clearer view of what they’re actually acquiring.

Let’s take a closer look at what to focus on, what red flags to watch for, and how to build AI due diligence into your existing M&A playbook.

Why AI Due Diligence Matters

Companies are leaning into AI harder than ever. It helps them move faster, operate smarter, and differentiate themselves in competitive markets. For investors, this sounds great. AI can boost margins, cut costs through automation, and open new product lines.

But here’s the problem. Not every company using AI is doing it responsibly. Some are moving fast and skipping critical steps. Others are outsourcing model development without oversight. And many simply don’t have mature AI governance structures in place.

So what does this mean in an M&A context?

It means the AI systems you’re acquiring may not be well-documented. They may be biased. They may be built on shaky data. They might even be violating laws that will go into effect next year.

Take this example. OpenAI’s video generation tool, Sora, was found to perpetuate biases by depicting stereotypical job roles and appearances. For instance, pilots, CEOs, and professors were predominantly portrayed as men, while women were shown in roles like flight attendants and receptionists. This highlights how AI can inadvertently reinforce societal biases if not properly audited for fairness.

If a model you acquire turns out to be biased in a similar way, or non-compliant with rules such as labor regulations, it becomes your problem the day the deal closes. That’s why due diligence on AI is so important. You’re not just evaluating technical performance. You’re evaluating legal exposure, brand risk, and operational reliability.

What to Evaluate During AI Due Diligence

So, how do you actually assess the AI components of a business?

You can’t just ask, “Is your AI working?” and move on. You need to dig into several key areas to understand what’s under the surface. These four are a good starting point.

1. Transparency and Explainability

Can the company explain how its AI models work? Can they show what data went into them, what assumptions they rely on, and how decisions are made?

If the team can’t explain the model in plain language, that’s a red flag. Not just because it’s hard to trust, but because a growing number of regulations now require some form of explainability. And stakeholders (including customers, employees, and regulators) are expecting more transparency than ever.

You don’t need every detail about the algorithm. But you do need clarity on what the model is doing, how it’s doing it, and whether that process is accountable.

2. Data Governance

Every AI system is only as good as the data it’s built on. During due diligence, ask where the training data came from. Was it licensed properly? Was it ethically sourced? Does it include sensitive personal information that could trigger privacy regulations?

Also ask how the data is managed. Is it stored securely? Is it updated regularly? Can the company trace how data flows through its AI systems?

If they can’t answer these questions clearly, it might be a sign of poor governance and a risk to you post-acquisition.

3. Regulatory Compliance

Here’s the part that often gets overlooked.

The AI you’re buying has to meet local and global compliance standards. Depending on the industry and location, that could mean GDPR, HIPAA, or the EU AI Act. It could also include sector-specific rules around financial services, health tech, or hiring.

Look for documented impact assessments, risk classifications, and alignment with frameworks like NIST’s AI Risk Management Framework or the ISO/IEC 42001 standard. If those don’t exist, be prepared to build them from scratch or face regulatory exposure later.

4. Ethical and Social Risks

This might sound abstract, but it’s not. Ethical AI is increasingly tied to real-world consequences.

A model that reinforces discrimination, makes unfair decisions, or can’t be audited will draw attention from regulators, advocacy groups, and the media. Even if it’s unintentional, the fallout can be serious.

Ask how the company thinks about fairness, bias, and harm (discrimination in hiring, misidentification in facial recognition, reinforcement of stereotypes, exclusion from services). Look for evidence that they test models for these issues and that they have clear policies in place.

If the team shrugs or says “We haven’t had a problem so far,” that’s not confidence. That’s avoidance.
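
One way to turn the fairness question into something testable is to compare outcome rates across groups. The sketch below is a minimal illustration, not a complete fairness audit. It assumes you can obtain a sample of model decisions labeled with a group attribute, and it borrows the "four-fifths" ratio, a rule of thumb from US employment-selection guidance, as an illustrative screening threshold. The right metric and threshold depend on the use case and jurisdiction. The function names and sample data are hypothetical.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of favorable outcomes per group.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True when the model produced a favorable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome; nothing to compare
    return min(rates.values()) / highest


# Hypothetical sample: 100 decisions per group.
sample = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = selection_rates(sample)
ratio = impact_ratio(rates)
flagged = ratio < 0.8  # illustrative threshold, an assumption of this sketch
print(rates, round(ratio, 2), flagged)
```

A low ratio does not prove discrimination, and a high one does not rule it out. It is a prompt for a follow-up question, which is what you want at this stage of diligence.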

Risks to Watch For

So what are the most common warning signs? Here’s a sample of things that should prompt you to slow down and look closer.

- AI models that can’t be explained or audited

- Data sources that are unclear, outdated, or obtained from third parties under vague terms

- No formal compliance processes for AI systems

- Lack of internal AI policies or governance committees

- Evidence of bias or performance gaps across key user groups

- Overdependence on one or two external AI vendors

- Security concerns around model access or deployment infrastructure

Any one of these could delay integration, damage reputation, or even trigger penalties after the deal closes. In some cases, they might be deal-breakers. In others, they can be managed with the right plan. But either way, you need to know about them early.

4 Best Practices for Investors and Deal Teams

Alright, so what can you actually do to reduce risk during the M&A process?

Here are four steps that help make AI due diligence more structured and reliable:

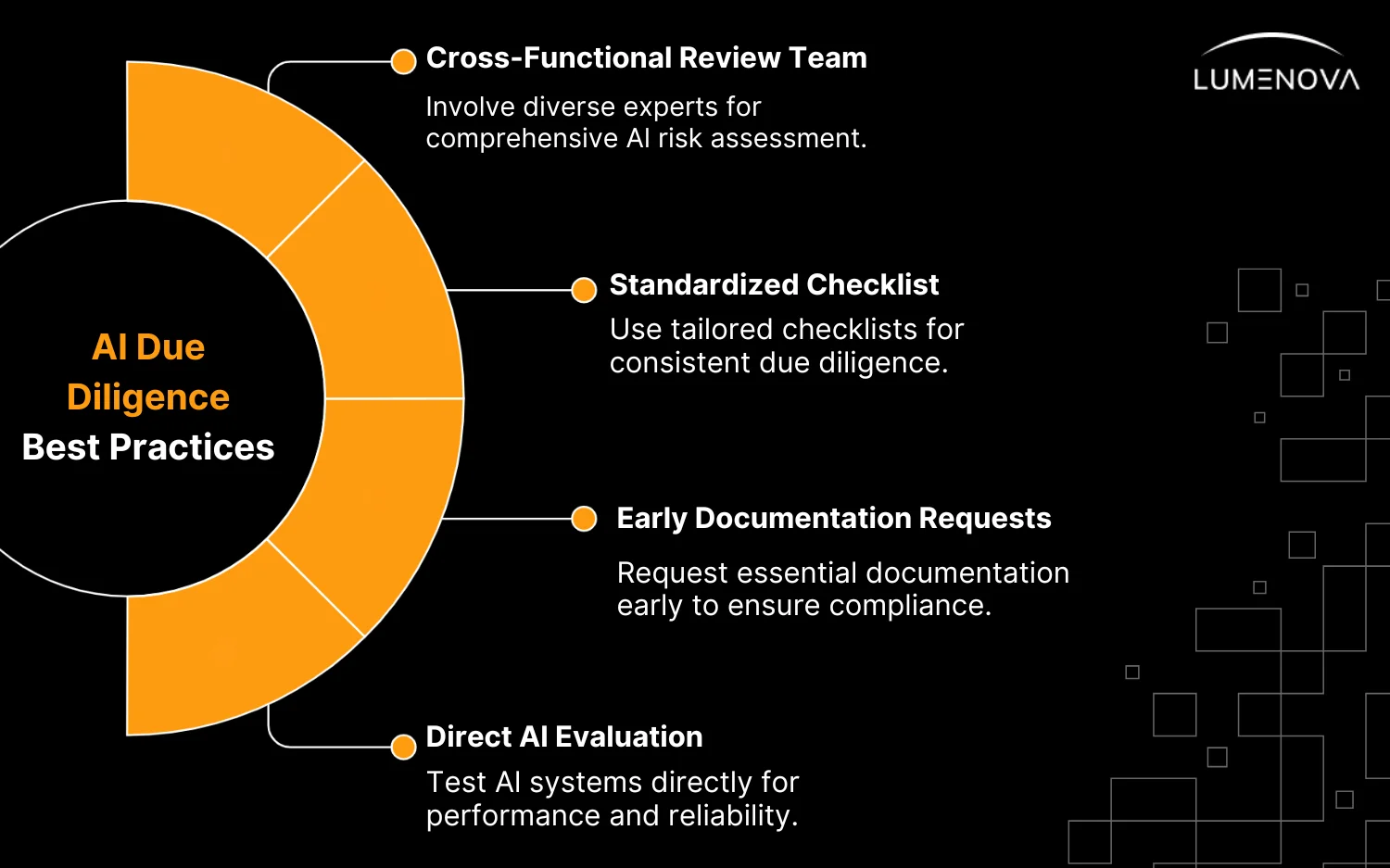

1. Build a Cross-Functional Review Team

Don’t leave this to legal or IT alone. You need input from data scientists, risk experts, compliance officers, and ethics specialists. AI risk is multidimensional, and your diligence process should reflect that.

2. Use a Standardized Checklist

Create a repeatable framework you can apply across deals. Include questions about data sourcing, model explainability, regulatory status, and ethical testing. Use industry frameworks where possible, but tailor them to your business and the deal type.
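
If your team tracks diligence in code or spreadsheets, a checklist like this can be represented as simple structured data. The sketch below is one possible shape, assuming each item records whether the target answered the question and whether the answer was backed by documentation. The class, field names, and sample questions are hypothetical and should be replaced with your own framework.

```python
from dataclasses import dataclass


@dataclass
class ChecklistItem:
    area: str        # e.g. "data sourcing"
    question: str
    answered: bool   # did the target give a clear answer?
    evidence: bool   # was the answer backed by documentation?


def open_items(items):
    """Return items needing follow-up: unanswered, or answered without evidence."""
    return [item for item in items if not (item.answered and item.evidence)]


def coverage_by_area(items):
    """Share of fully supported items per area, from 0.0 to 1.0."""
    totals, supported = {}, {}
    for item in items:
        totals[item.area] = totals.get(item.area, 0) + 1
        if item.answered and item.evidence:
            supported[item.area] = supported.get(item.area, 0) + 1
    return {area: supported.get(area, 0) / totals[area] for area in totals}


# Hypothetical entries covering the four areas named above.
checklist = [
    ChecklistItem("data sourcing", "Is training data licensed?", True, True),
    ChecklistItem("data sourcing", "Does it contain personal data?", True, False),
    ChecklistItem("explainability", "Can the team explain model decisions?", False, False),
    ChecklistItem("regulatory status", "Is there a documented risk classification?", True, True),
    ChecklistItem("ethical testing", "Are models tested for bias?", True, True),
]

coverage = coverage_by_area(checklist)
follow_ups = [item.question for item in open_items(checklist)]
print(coverage)
print(follow_ups)
```

Separating "answered" from "evidence" is a deliberate choice here: a confident verbal answer with no documentation behind it stays on the follow-up list.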

3. Request Documentation Early

Ask for model cards, performance reports, data usage policies, audit logs, and risk assessments. These shouldn’t be optional. If the company can’t produce them, it might mean they don’t exist or haven’t been maintained.

4. Don’t Just Take Their Word For It

If the AI is a core part of the product or revenue stream, consider testing it. Have your technical team evaluate it directly. Check for performance under edge cases. Look for signs of drift. Evaluate user impacts. It takes more time, but it’s worth it.
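
For the drift check, one commonly used measure is the Population Stability Index (PSI), which compares the distribution of a model score or input at training time against a recent sample. The sketch below is a simplified version that assumes equal-width bins over the combined range of both samples. Production implementations often bin on quantiles of the baseline instead. The sample data is synthetic, and the 0.25 level used in the example is a widely quoted rule of thumb, not a regulatory threshold.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between two samples of a numeric score."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # guard against all values being identical

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = min(int((v - lo) / width), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data: baseline scores spread over 0..1, recent scores shifted upward.
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]

no_drift = psi(baseline, baseline)
drift = psi(baseline, recent)
print(no_drift, drift > 0.25)
```

A high PSI says the population the model sees has changed, not that the model is wrong. It tells your technical team where to look before relying on the seller’s historical performance numbers.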

Want to learn more about AI risks in business deals? Read our simple guide on Enterprise AI Governance. It shows you how to spot problems, follow rules, and make sure AI is safe and fair, both before and after a deal is done.

How Lumenova AI Helps with M&A Due Diligence

This process doesn’t have to be painful. Lumenova AI helps investors and risk leaders build structured, reliable AI due diligence processes without slowing down the deal.

With pre-built templates, customizable risk frameworks, and automated evaluation tools, we make it easier to:

- Identify AI risks across models and systems

- Check compliance against the EU AI Act and other regulations

- Detect bias, drift, and transparency gaps

- Streamline documentation and reporting for stakeholders

- Confidently move forward with AI-heavy deals

By embedding Responsible AI into your M&A workflow, you reduce exposure and gain clarity before integration begins.

Questions to Think About

- Do your current M&A checklists cover AI risks?

- How do you know the AI you’re buying is legal and fair?

- Can the company clearly explain how their AI works?

- Where did the training data come from (and is it clean and legal)?

- Who’s in charge of AI risk at the company?

- What happens if the AI makes a bad or biased decision?

- Would you still buy the company if no one can explain the AI?

Conclusion

AI has transformed how M&A deals are done. What was once a simple IT check is now a full strategic review. As a result, companies that recognize this shift early are better positioned to avoid surprises, reduce risk, and protect long-term value.

That’s why, if AI is part of the deal, AI due diligence must be part of the plan. More importantly, it can’t just be a checkbox. Instead, it should be a clear process, supported by the right tools and guided by smart questions.

Fortunately, it doesn’t have to be complicated. With the right approach, teams can identify risks early, ask better follow-ups, and move forward with confidence.

Request a custom demo to see how Lumenova AI supports investors who don’t want to be caught off guard after closing.